Two factors drove this LCA. Externally, AI's carbon footprint is under growing scrutiny, and there are legitimate questions about the environmental cost of large language models. Internally, the study aligned with our Climate Compass 2025 pledge and our commitment to transparency.

The study followed standard LCA frameworks adapted for digital services, with ADEME's Product Category Rule for cloud services as our methodological foundation. Because this rule is a recognized industry standard, it helps keep our results consistent, comparable, and credible to auditors and sustainability teams.

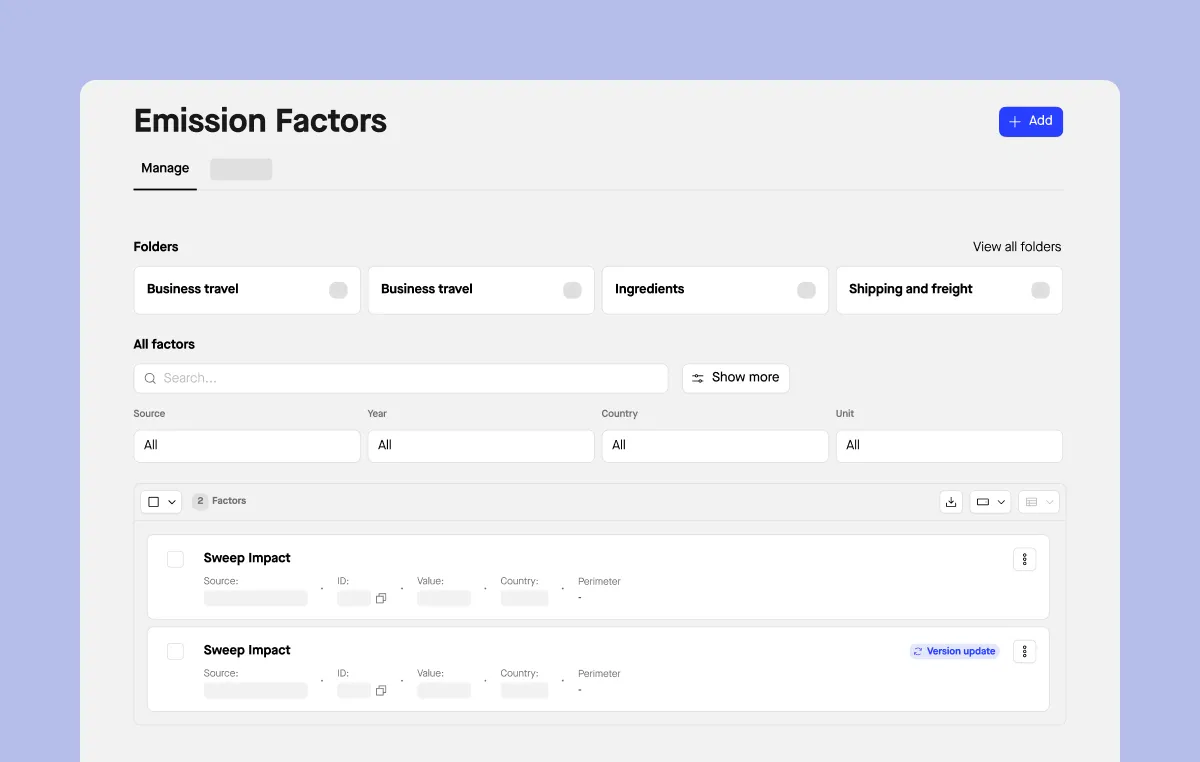

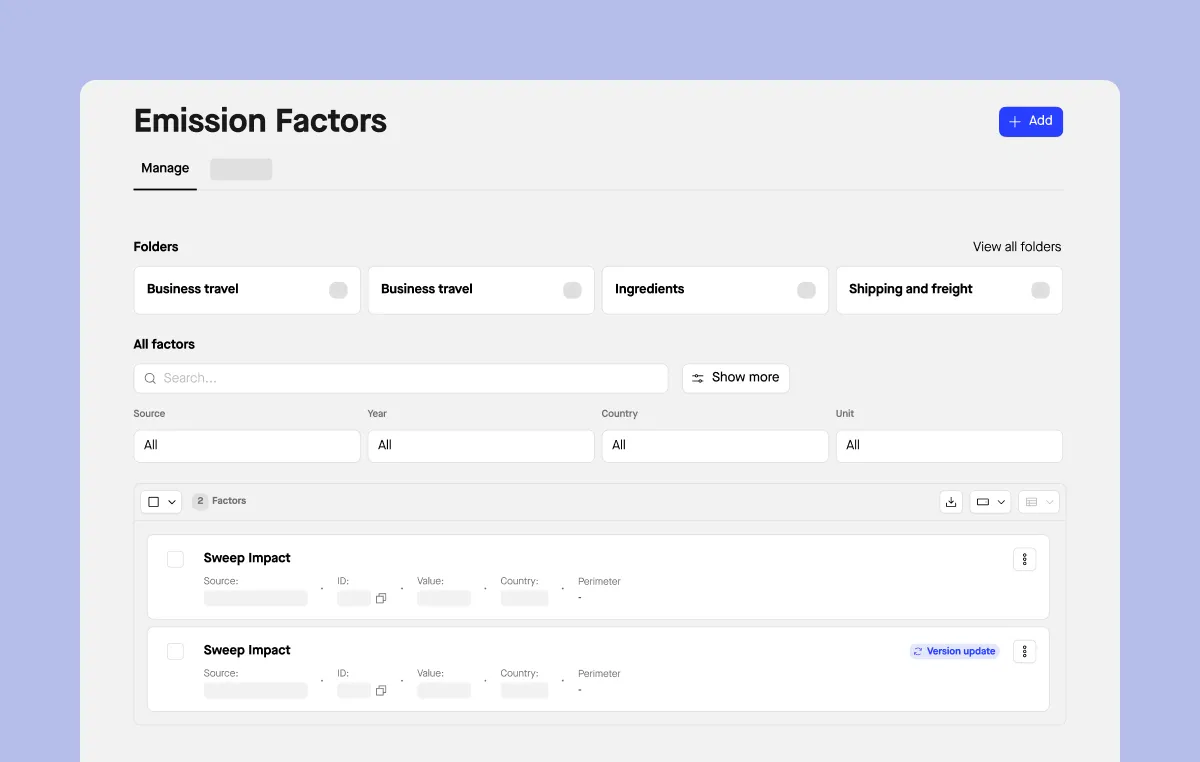

What we measured and how

We followed a cradle-to-gate approach focused on our cloud infrastructure: Amazon Web Services for computing and AI workloads, and Snowflake for data storage and analytics. These are where our digital emissions actually occur: servers running continuously in energy-intensive data centers.

The process involved defining clear boundaries, collecting emissions and energy data from our infrastructure providers, converting that data into CO₂ equivalents (CO₂e) using relevant emission factors, and calculating the impact per functional unit. The entire study underwent a structured internal review covering scope, data quality, and interpretation.
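The calculation chain described above (provider data, emission factors, allocation to a functional unit) can be sketched in a few lines of Python. This is a simplified illustration, not the study's actual model: the record fields, the rule that each record carries either energy data or provider-reported CO₂e, and any numbers you feed it are assumptions.

```python
from dataclasses import dataclass


@dataclass
class ActivityRecord:
    """One hypothetical line of provider data for a service and period."""
    source: str                    # illustrative label, e.g. "aws" or "snowflake"
    energy_kwh: float = 0.0        # electricity consumed, if the provider reports energy
    emission_factor: float = 0.0   # assumed kg CO2e per kWh applied to energy_kwh
    reported_kg_co2e: float = 0.0  # emissions the provider already reports in CO2e


def record_kg_co2e(r: ActivityRecord) -> float:
    """Convert a single record to kg CO2e, refusing to count it twice."""
    if r.reported_kg_co2e and r.energy_kwh:
        raise ValueError(f"{r.source}: use either energy data or reported CO2e, not both")
    if r.reported_kg_co2e:
        return r.reported_kg_co2e
    # Energy-based estimate: activity data multiplied by an emission factor.
    return r.energy_kwh * r.emission_factor


def total_kg_co2e(records: list[ActivityRecord]) -> float:
    """Sum the CO2e of all in-scope records."""
    return sum(record_kg_co2e(r) for r in records)


def impact_per_unit(records: list[ActivityRecord], functional_units: int) -> float:
    """Divide total emissions evenly across the functional units delivered."""
    if functional_units <= 0:
        raise ValueError("functional_units must be positive")
    return total_kg_co2e(records) / functional_units


if __name__ == "__main__":
    # Invented inputs, for illustration only.
    records = [
        ActivityRecord("aws", energy_kwh=1000.0, emission_factor=0.5),
        ActivityRecord("snowflake", reported_kg_co2e=100.0),
    ]
    print(impact_per_unit(records, 200))
```

Keeping energy-based and provider-reported figures in separate records makes double counting an explicit error rather than a silent overstatement.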

Defining the boundaries

We excluded user devices and data transmission, not arbitrarily but because reliable data wasn't available at the granularity we needed. Our guiding principle was clear: if we can't measure it properly, we don't estimate it. Including poor-quality data would undermine the credibility of the results we do have confidence in. This is standard LCA practice: focus on what is significant and measurable.

We concentrated on AWS and Snowflake because they account for the largest share of our operational spend, and spend is a practical proxy for environmental significance. Other tools such as GitHub, Dust, Datadog, and Slack were excluded because their emissions data isn't publicly available at the level of detail we required.

Two metrics for two purposes

Because Sweep delivers two distinct services, a core data platform and an AI layer, we selected two functional units. The first, kg CO₂e per measurement, reflects core platform usage: uploading or submitting a sustainability data entry. The second, kg CO₂e per Sweepy credit, isolates the AI layer and lets us benchmark it against other large language models.
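The two functional units can be illustrated as two separate divisions over one reporting period. This is a minimal sketch under stated assumptions: it supposes emissions have already been split into platform and AI workloads (the study's actual attribution method is not described here), and the `PeriodFootprint` structure and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodFootprint:
    """Hypothetical footprint for one reporting period, already split by workload."""
    platform_kg_co2e: float  # emissions attributed to core platform workloads
    ai_kg_co2e: float        # emissions attributed to the AI layer (Sweepy)
    measurements: int        # sustainability data entries uploaded or submitted
    sweepy_credits: int      # Sweepy credits consumed in the same period


def kg_per_measurement(fp: PeriodFootprint) -> float:
    """Functional unit 1: kg CO2e per measurement (core platform usage)."""
    if fp.measurements <= 0:
        raise ValueError("measurements must be positive")
    return fp.platform_kg_co2e / fp.measurements


def kg_per_sweepy_credit(fp: PeriodFootprint) -> float:
    """Functional unit 2: kg CO2e per Sweepy credit (AI layer only)."""
    if fp.sweepy_credits <= 0:
        raise ValueError("sweepy_credits must be positive")
    return fp.ai_kg_co2e / fp.sweepy_credits


if __name__ == "__main__":
    # Invented figures, for illustration only.
    fp = PeriodFootprint(
        platform_kg_co2e=500.0, ai_kg_co2e=30.0,
        measurements=250_000, sweepy_credits=1_500,
    )
    print(kg_per_measurement(fp), kg_per_sweepy_credit(fp))
```

Keeping the AI emissions out of the per-measurement figure is what lets the credit metric be compared with other language models on its own terms.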

This dual approach supports different conversations with different audiences: one about platform efficiency, one about AI sustainability. For customers, it means they can now account for Sweep's platform in their own value chain emissions reporting, specifically under purchased software. The Sweepy credit metric also lets them understand the environmental cost of their AI-assisted workflows.
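As a rough illustration of how a customer might use the two metrics, the sketch below multiplies their own activity by each per-unit intensity. The function name and parameters are hypothetical, the intensity values would come from Sweep rather than from this code, and summing the two terms assumes the per-measurement figure excludes the AI layer so that nothing is counted twice.

```python
def purchased_software_kg_co2e(
    measurements: int,
    sweepy_credits: int,
    kg_per_measurement: float,
    kg_per_credit: float,
) -> float:
    """Estimate one customer's Sweep-related emissions for a reporting period.

    Assumes the two intensities cover disjoint emissions (platform vs. AI layer).
    """
    if measurements < 0 or sweepy_credits < 0:
        raise ValueError("activity counts cannot be negative")
    platform_part = measurements * kg_per_measurement  # core platform usage
    ai_part = sweepy_credits * kg_per_credit           # AI-assisted workflows
    return platform_part + ai_part


if __name__ == "__main__":
    # Placeholder intensities and activity, for illustration only.
    print(purchased_software_kg_co2e(1000, 50, 0.002, 0.02))
```

Reporting the AI term separately, rather than only the total, is what lets a customer see the share attributable to AI-assisted workflows.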